Thanks for all your visits and comments on this blog; we’ll meet again in three weeks. With OpsMgr R2 there will certainly be a lot to blog about.

Bye,

Marnix


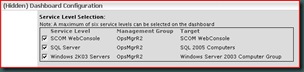

As a result, the wrong Service Level will be displayed.

As KB971406 states: ![]()

Hopefully a hotfix will soon come out to address this issue.

KB971233

This hotfix solves an issue where the console shows customized subscriptions. These subscriptions resemble the following under the Administration\Notifications\Subscriptions\Subscription Name node: SMTP{GUID}

This hotfix contains a SQL script which must be run against the OpsMgr database.

Read the instructions carefully in this KB before applying it.

KB971504 tells all about it.

(The same hotfixes are needed as well when running OpsMgr SP1 on Windows 2008 Server)

Check it out: http://support.microsoft.com/?kbid=971410

When in doubt about a certain upgrade path, post your question on the OpsMgr Online Forum BEFORE starting the upgrade. http://social.technet.microsoft.com/Forums/en-US/category/systemcenteroperationsmanager

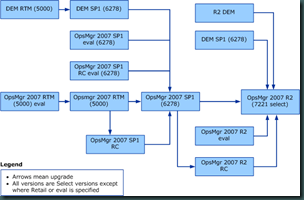

From OpsMgr SP1 to R2 RTM

When the OpsMgr environment is at SP1 level you can upgrade directly to R2.

From OpsMgr RTM to R2 RTM

Sometimes I bump into OpsMgr environments which are still at RTM level. These environments can't be upgraded directly to R2. First SP1 needs to be applied before an upgrade to R2 is possible.

From OpsMgr R2 RTM Eval to R2 RTM

You can upgrade directly to R2 RTM full version.

From OpsMgr R2 RC to R2 RTM Eval

Not possible. Wait for the R2 RTM Select version to perform an in-place upgrade. Look here for the information from the community.

Diagram of available upgrade paths

Tasks to be completed BEFORE the upgrade

Speeding up the upgrade

It helps to run a stored procedure on the SQL server hosting the SCOM databases, which will speed up the upgrade process: ![]()

Running upgrade using RDP connections

When the upgrade is run on the servers through an RDP session, it is important to start that session with the switch which makes it a Console session (mstsc /console, or mstsc /admin with newer RDP clients). Otherwise the log files created during the upgrade will be lost when the system restarts.

Upgrade order

And - if applicable - the ACS Collector with the ACS-database

Special thanks to Graham Davies who warned about some pitfalls.

Be aware though that with OpsMgr R2 the MP guide is still needed. Read this posting on how to go about it.

Mostly because the MPs are packaged as an MSI file. When one runs this file, the local MSI database gets filled up with all kinds of unneeded information about installed programs, which are mostly unpacked MPs.

With a free MSI editor/unpacker/viewer, installing the MSI file isn’t needed. Simply run the tool and open the MSI file: a new folder is created in the same folder where the MSI file resides, containing all files found within the MSI file, without filling up the local MSI database with all kinds of unneeded information.

This free tool can be downloaded here. I have used it on Windows Vista without any problems and now I use it on Windows 7 as well, also without any problems.

Reports can be created as well, including the power consumption for each computer or for a group of computers.

The monitored system must be a Windows Server 2008 R2 server or a Windows 7 client. These systems must be attached to a PDU (Power Distribution Unit) as well.

Before this feature is available in OpsMgr R2, the Power Management Library management pack must be imported. With this MP a new type of monitor becomes available: Power Consumption.

With this monitor the Power Consumption can be monitored.

I hope to be able to test it. As soon as I get some results I will post them on my blog.

Here are the locations where the documentation can be found:

Check it out here.

Therefore I have made a small overview of the available OpsMgr versions:

By running a SQL query (*) on the OperationsManager database, the OpsMgr version can easily be found:

select DBVersion from __MOMManagementGroupInfo__

(*: This query, with many other useful queries, can be found in this blog article by Kevin Holman.)

Today the RTM of OpsMgr R2 has been released.

Fast facts:

On Microsoft Connect more information can be found as well.

It tells how to configure a Gateway Server to communicate with a different Management Server.

I tested it in a test environment of mine and it works great.

Article to be found here.

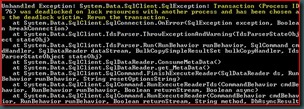

Microsoft has just released a new hotfix for OpsMgr SP1:

KB968082

This hotfix solves an issue with the Auto Agent Assignment option in OpsMgr. When this is used in an AD domain with a name that starts with a digit, this error message is shown:

XSD verification failed for management pack. [Line: 53, Position: 13]

The Notifications Update Alert History Tool.

Also a must-have. To be downloaded here.

The Notification Test Tool.

To be downloaded here.

Beware. The tool mentioned here is to be used at your own risk. This is provided "AS IS" with no warranties, and confers no rights.

At a customer's site AEM was in use. However, the customer decided not to use it anymore. So AEM had to be disabled.

The first steps in this process are pretty straightforward. The GPO which tells the clients to forward the events to the server responding to the AEM requests has to be disabled.

Then the SCOM Management Server(s) running this feature (Client Monitoring) have to be adjusted. In the SCOM Console go to the Administration pane, Management Servers. Right click the Management Server where this feature is enabled and select ‘Disable Client Monitoring’.

Now one tends to think all is well. As a matter of fact it is. But when one opens the SCOM Console, goes to the Monitoring pane and checks the folder ‘Agentless Exception Monitoring’, ‘Application View’, a lot of data will still be present in this View.

This is because most data is still in the Data Warehouse, where it stays for a long time by default (raw data 30 days, aggregated data 400 days).

How neat would it be if this data, and ONLY this data, could be groomed out much earlier.

Running this query against the OperationsManagerDW database shows these default settings (Thanks to Kevin Holman for providing this sql-query):

Query:

SELECT AggregationTypeID, BuildAggregationStoredProcedureName, GroomStoredProcedureName, MaxDataAgeDays, GroomingIntervalMinutes

FROM StandardDatasetAggregation WHERE BuildAggregationStoredProcedureName = 'AemAggregate'

Output: ![]()

Where the grooming settings for the OpsMgr database can easily be adjusted in the SCOM Console, this is not the case for the Data Warehouse database.

So after a bit of searching I found this tool made by Daniel Savage.

And after some testing (in one of my SCOM test environments) I changed these settings to 1 day (raw data) and 10 days (daily aggregations).

Now the output of the earlier mentioned sql-query shows this information: ![]()

This is much better. The AEM data will be gone within two weeks!
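Since the output of the query changes after the tool has run, the retention values evidently live in the StandardDatasetAggregation table. As a rough sketch only, and not a description of how the tool itself works, the same change could be expressed in T-SQL as shown here. It reuses the table and column names from the query above; the AggregationTypeID values (0 for raw data, 30 for daily aggregations) are an assumption and should be checked against the output of that query first. Anything like this belongs in a test environment with a backup at hand.

```sql
-- Sketch only: shorten AEM retention in the OperationsManagerDW database.
-- Assumption: AggregationTypeID 0 = raw data, 30 = daily aggregations.
USE OperationsManagerDW;

BEGIN TRANSACTION;

UPDATE StandardDatasetAggregation
SET MaxDataAgeDays = CASE AggregationTypeID
                         WHEN 0  THEN 1    -- raw data: keep 1 day
                         WHEN 30 THEN 10   -- daily aggregations: keep 10 days
                         ELSE MaxDataAgeDays
                     END
WHERE BuildAggregationStoredProcedureName = 'AemAggregate';

-- Review the result before making it permanent.
SELECT AggregationTypeID, MaxDataAgeDays, GroomingIntervalMinutes
FROM StandardDatasetAggregation
WHERE BuildAggregationStoredProcedureName = 'AemAggregate';

-- COMMIT TRANSACTION;    -- run when the values look right
-- ROLLBACK TRANSACTION;  -- run to undo the change
```

The transaction is left open on purpose so the new values can be inspected first; it should be committed or rolled back right away, since an open transaction keeps locks on the table.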

Thanks to Daniel Savage for the tool and Kevin Holman for the query.

When the Active Directory Auto Agent Assignment feature of System Center Operations Manager 2007 is enabled, event 11470 is logged every hour on the Root Management Server. This symptom occurs because the <Server>_PrimarySG_<number> security group is repopulated when Active Directory is requeried.

KB967843 describes this issue and has a hotfix for download.

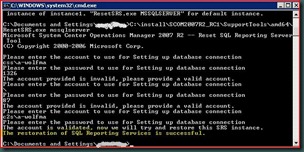

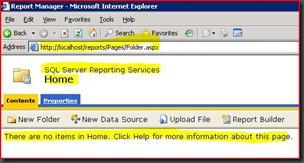

The installation media for SCOM contains a tool, ResetSRS.exe, which brings SRS back to its state before SCOM Reporting was installed. It is located in the folder ~\SupportTools\<systemarchitecture>\.

Just running this tool is not sufficient. Afterwards other steps must be taken as well, like running the installation of SCOM Reporting again (make sure to connect to the ‘old’ Data Warehouse database!).

However, running this tool might reveal other issues at hand as well. For instance this message might show up when running this tool:

First check the ReportServer database on the SQL server hosting it. Big chance this database has entered Single User mode: ![]()

But just running this query ‘ALTER DATABASE ReportServer SET MULTI_USER’ to make the database Multi-User again will not work. Before running it make sure to stop the related SRS service. Now this database will accept this query.
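The check and the fix can be sketched in T-SQL as follows. This is a minimal example which assumes the Reporting Services database has the default name ReportServer and that the SRS service has already been stopped; sys.databases and its user_access_desc column are standard catalog metadata in SQL Server 2005 and later.

```sql
-- Show the current access mode of the ReportServer database
-- (SINGLE_USER, RESTRICTED_USER or MULTI_USER).
SELECT name, user_access_desc
FROM sys.databases
WHERE name = 'ReportServer';

-- With the SRS service stopped, switch the database back to multi-user mode.
ALTER DATABASE ReportServer SET MULTI_USER;
```

Running the SELECT again afterwards should show MULTI_USER before the SRS service is started again.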

Now run the ResetSRS.exe tool. When all is well this output will be generated:

Now open IE on the SRS server and type in this url: ‘http://localhost/reports’. When all is well this will be shown (it can take a while before it is loaded since the Application Pool has been restarted):

Now the basis (SRS) for SCOM Reporting is Up & Running again and the other actions to make SCOM Reporting work again can be taken.

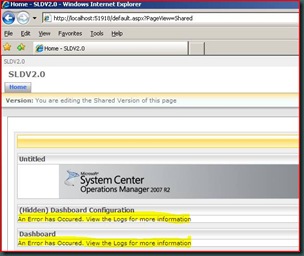

There is much to tell so let’s start. For the answers of the questions I have used the SLD document a bit.

Question 1: What does it add compared to the default solution already available in SCOM R2?

This solution is built on Windows SharePoint Services 3.0 and designed in such a way to work in conjunction with SCOM R2, configured to monitor business critical applications. When the SLD components are correctly configured and operating, the dashboard displays – almost real-time at 2 to 3 minutes (!) – summarized data about the service levels.

So this solution combines the strengths of SharePoint and SCOM. Besides that, a manager no longer needs to request access to SCOM in order to know whether the SLAs are being met, since the measured results are available in SharePoint.

Question 2: How does it work?

In SCOM one defines the service level goals (named Service Level Objectives, or SLOs in SCOM) against an application or group of objects. Here the service level targets are set as well.

The SLD evaluates each SLO over a defined time period and decides whether it met its goal or not.

The dashboard displays each SLO and identifies its states based on the defined service level targets. The dashboard can display a maximum of six different applications or groups.

Question 3: What does the dashboard summarize?

Process Flow of the SLD:

(picture taken from SLD document)

Requirements

Of course a working SCOM R2 environment is needed. Besides that:

Quick Guide

This quick guide describes how the solution is installed and configured, and how some SLOs are built and displayed in SharePoint. It presumes SharePoint 3.0 is already present, configured and in working condition.

![clip_image002[5] clip_image002[5]](http://lh6.ggpht.com/_9WdQG0JZ7go/Sg07y3XBbrI/AAAAAAAAA9k/ykXpeSutYRQ/clip_image002%5B5%5D_thumb%5B1%5D.jpg?imgmax=800)

Conclusion

With this new solution, even though it is still in beta, Microsoft has shown its dedication to SCOM/OpsMgr. It delivers added value for many organizations and combines the strength of SharePoint Services 3.0 and SCOM R2. Now managers can see, almost in real time, how the Business Critical systems/applications are performing and whether they meet the SLAs.

With this, good tooling has been brought to the market which gives businesses good insight into their processes and their weakest links.

Even though this solution is rather easy to implement, one must realize that most of the time needed to make it work goes into a good translation of the SLAs to the SLOs in SCOM/OpsMgr.

Otherwise one is looking at a nice dashboard but getting wrong information.

Therefore preparation is the keyword, and most of it must be done at the organizational level instead of the technical level. Only then will this solution live up to its promises.

Since this SLD is tightly integrated with SharePoint, it can use its security as well. Therefore the dashboards can be separated from each other. So customer X can only see his/her related dashboard and customer Y can only see his/her dashboard.

For companies using SCOM as a hosted service, this is a huge advantage. Their customers can now see how their systems are performing.

Special thanks

It took me a while to get everything working. As stated before in an earlier blog posting of mine, this wasn’t because of the solution but because of problems within my SCOM test environment and a shortage in my knowledge of SharePoint.

The Program Manager for this solution, Raghu Kethineni, has been of great help to me in making this work. Thanks Raghu!

Finding the reason behind this never ending discovery process could take some time. Mostly it turned out that the SQL Broker Service wasn’t running. And this service is needed for the discovery of servers/clients.

In SCOM R2 Microsoft has addressed this issue. Yes, the SQL Broker Service still needs to be in a running state but now the wizard shows this screen:

So when a discovery keeps running with no end, one now knows what can cause it. Of course there might be another reason for it, but more than 90% of the time the SQL Broker Service not running is the culprit.

Even though it is nothing more than a small cosmetic change, it can be a real timesaver for troubleshooting.
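Whether the broker is enabled can also be checked directly on the SQL server, where Service Broker is a per-database setting. A minimal sketch, assuming the operational database has the default name OperationsManager (adjust it if yours differs):

```sql
-- 1 = Service Broker enabled, 0 = disabled.
SELECT name, is_broker_enabled
FROM sys.databases
WHERE name = 'OperationsManager';

-- If the query returns 0, enable the broker. This statement needs exclusive
-- access to the database, so stop the OpsMgr services connecting to it first.
ALTER DATABASE OperationsManager SET ENABLE_BROKER;
```

If the ALTER DATABASE statement seems to hang, it is usually waiting for other connections to the database to close.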

None of them related to the solution itself but to my experience with SharePoint (not present), and a test environment being a bit problematic.

But after a total rebuild of the test environment (DC, SQL, IIS, RMS, SharePoint) I got the SLD solution Up & Running.

All I have to do now is to build some SLAs to monitor in OpsMgr and adjust the SharePoint Portal website for the SLD.

As soon as I get results I will post about it.

KB971285 describes how to solve this problem.

This package will most certainly contain most post-SP1 QFEs/hotfixes.

Besides those QFEs, some changes will be added as well. Since I am not sure what those changes exactly are going to be and I do not like to guess, all I can say is to wait until more information gets out.

However, this posting will be about the document accompanying the MP Authoring Console.

Why? Well, this document tells exactly what an MP is all about: the way it is constructed, how MPs implement models for monitoring, what classes are and their related attributes, what a Service model is and the available relationships, and so on.

So somehow this document is like ‘Everything You Wanted to Know About MPs (But Were Afraid to Ask)’…

A good read for everyone who works with SCOM on a daily basis and wants to know more about what makes SCOM tick.

The document for the MP Authoring Console for SCOM SP1 is available for download, to be found here.

What happens is that SCCM has a mechanism in place which backs up the SCCM installation folder of that server. It pauses the SCOM Agent Health Service for a short while (a minute or so).

This event in the OpsMgr eventlog will be shown:

Mostly this service is resumed within a minute, so SCOM will not Alert on it. Sometimes however the backup-process lasts a bit longer and this Alert will be shown in the Console:

Even though the Agent Health service is suspended (Maintenance Mode), an Alert will be raised. This is because the Agent Health Watcher is still running and that one will fire off an Alert:

For more information about Maintenance Mode, check this posting of mine.

The simpler a rule/monitor is, the better it works and the longer it will be used. For the department which got the most rules/monitors I decided they would get the Priority High, and their rules/monitors would be set the same way.